Reporting inappropriate content on X (formerly Twitter), and avoiding writing inappropriate content

Protecting the platform against hateful posters, scammers, and spammers

Note: this draft began long before a number of changes occurred at X (formerly Twitter; I'm not rewriting this any further to accommodate the new name). Please keep that in mind as we continue. As Elon Musk threatens to make all blocked posts visible, I must redouble my efforts to complete this guide. This guide serves not only as a way to avoid being reported, but also as a way to identify and report bad actors. There will be many edits and additions, so reload to ensure you see the latest version.

There has been a significant rise in scams, spam, bigots, and fascists on Twitter.

This article is intended to help you recognize what content is reportable, how to report it successfully, and how to write in a manner that avoids being drawn into writing a reportable yourself.

I have been wary of writing this guide and making it public. It is possible that scammers, spammers, and bigots will use this against others. However, most regular Twitter users are not scamming, spamming, or posting hateful content, while scammers, spammers, fascists, and bigots are incapable of avoiding these issues. Publishing this is therefore still a net benefit to regular users.

What content isn’t allowed on Twitter?

At the core, this is covered in detail by the Twitter Rules and elaborated upon in the updated Twitter Hateful Conduct Policy, but there is specific content we are concerned with in this article. To summarize:

Bigoted content which attacks many protected categories, including: sexual orientation, gender identity (update: Twitter says they won’t act on gender identity, but the report category exists and it is still acted upon, especially in light of the new EU DSA rules in force from 25 August 2023), race, ethnicity, caste, religion, & nation of origin.

Content attacking disabilities, diseases, or age.

Content wishing harm upon others, for any reason.

Harassment, including sexual harassment, insults, and name-calling (yes, those are reportables, and it’s important to remember).

Wishing, threatening, or praising violence - even & especially when coded.

Incitement to mass harassment.

Violent event denial, such as denial of the Holocaust, the Nakba, and 9/11.

Violent event promotion, such as gore images or praising fascist activities.

Spam and scams.

Misinformation in general, especially concerning politics, elections, and/or health. (Apparently Twitter no longer polices this in any way, which is a violation of the Google and Apple Terms of Service, though neither has acted.)

There are other categories to watch for, but if you want a Top 10, there you are.

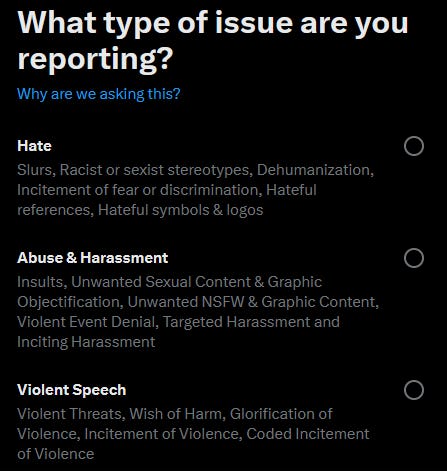

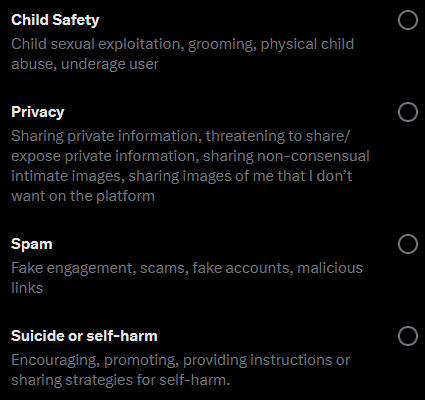

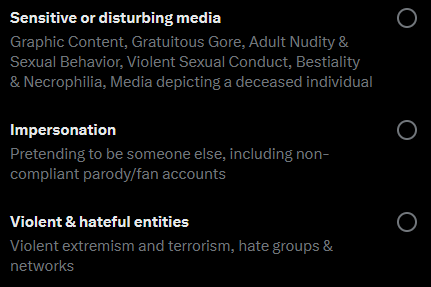

How to categorize your report

Once you click the ⋮ or ⋯ menu on a post or profile to report, there are many categories that can apply:

Use your best discretion based on those criteria.

Inside here, there will be additional options: Abuse/Harassment > Attacked based on identity (that’s most of the hate speech);

Abuse/Harassment > harassed or intimidated with violence (that’s threats, insults, and name-calling - yes, name-calling and insults are a reportable on Twitter, this is important);

Self-harm (encouraging it, giving information to do it, suggesting it, and so forth);

Spam (this is also where scams go), user impersonation, and a few others that should be quite clear from the main categories.

Under some report options, especially those concerning identity-based abuse, you will need to provide additional information. If you’re not sure that a category applies, don’t choose it. Accuracy in reporting is important.

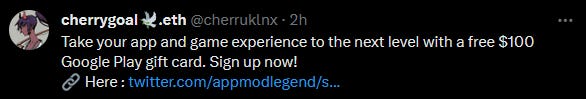

A (re)note on scammers and spammers

If you make an innocuous reply to something and quickly get a response like the one in the photo above, you are dealing with a scammer or spammer. It may be about T-shirts, it may be about locked, blocked, suspended, or inaccessible accounts, it may be about mutual aid (I have an article about that already), but you’ll know it by how fast and aggressively the reply arrives. If that happens, please do the following:

Open their profile in a new tab (this is easier on desktop).

Report that reply as Spam/Posting misleading or deceptive links leading to scams phishing or other malicious links.

Go through their profile and, for every attempt they have made (at least 5 of them), hit Spam/Posting misleading or deceptive links leading to scams phishing or other malicious links.

Since Twitter refuses to monitor proactively for this spam (despite having mechanisms used against the left) or any other generally hateful content, it’s on the rest of us to report it and demand a timely response (this should be a violation of the Apple and Google Terms of Service, but they’ve declined to enforce it).

How do I write posts and replies in a way that also avoids getting reported?

Now, we get to the easy part! Very, very easy.

Since trolls, bots, bigots, and fascists cannot help writing this way, you can avoid it yourself very easily.

There are very simple steps:

Keep calm and rational. No matter how mad the troll makes you, cool off. Take a walk, have a breather, count to 10. Whatever you have to do. Nothing about social media is ever time-sensitive. You have the time you need to collect yourself.

Don’t wish harm, death, dismemberment, general hate, etc. upon your target.

You can call out your subject’s ignorance of the subject matter or historical fact without using slurs or mentioning potential disabilities. Those are what they usually report you back for.

Never reply while mentioning reportable issues as a generality - such as race (when it comes to Russian citizens this includes calling them orcs - that is a reportable - heads up to NAFO trolls, that goes under Hate > Dehumanization), religion, gender or gender identity, sex or sexual preference, appearance, or national origin. This is what you’re supposed to be reporting them for doing, after all. You don’t want to give them or their group chat (there’s almost always a group chat they’re talking to) a reason to mass-report you back.

Mind that insults, swearing, and/or name-calling are a reportable. Did I not mention, many times and far earlier in this article, that this matters?

If the target’s hateful content packs far too many issues into one reply, you’re more than welcome to point out logical fallacies, especially their preferred tool, the Gish gallop. Select the worst of it, and address what you can within content/character limits, after the report.

Trolls, bots, bigots, and fascists are absolutely incapable of following these steps by the very nature of their content and character and intent. That’s the secret to reporting success. It’s also why I’m writing this article.

What can Twitter do?

I should have phrased this as what could Twitter do. Twitter could do a lot. Twitter simply refuses to do much.

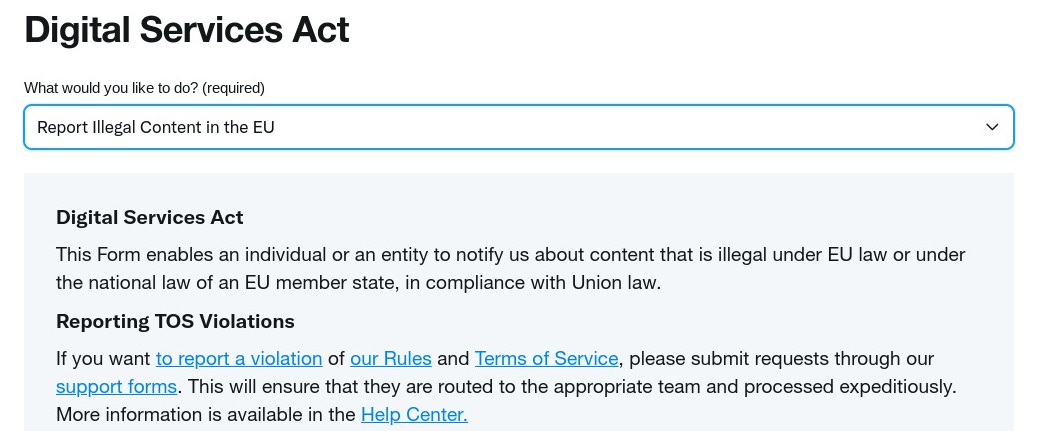

The rest of this part has been rewritten, as the Digital Services Act from the European Union was passed and takes effect on 25 August 2023, specifically Recital 12, which I will quote in full here:

In order to achieve the objective of ensuring a safe, predictable and trustworthy online environment, for the purpose of this Regulation the concept of ‘illegal content’ should broadly reflect the existing rules in the offline environment. In particular, the concept of ‘illegal content’ should be defined broadly to cover information relating to illegal content, products, services and activities. In particular, that concept should be understood to refer to information, irrespective of its form, that under the applicable law is either itself illegal, such as illegal hate speech or terrorist content and unlawful discriminatory content, or that the applicable rules render illegal in view of the fact that it relates to illegal activities. Illustrative examples include the sharing of images depicting child sexual abuse, the unlawful non-consensual sharing of private images, online stalking, the sale of non-compliant or counterfeit products, the sale of products or the provision of services in infringement of consumer protection law, the non-authorised use of copyright protected material, the illegal offer of accommodation services or the illegal sale of live animals. In contrast, an eyewitness video of a potential crime should not be considered to constitute illegal content, merely because it depicts an illegal act, where recording or disseminating such a video to the public is not illegal under national or Union law. In this regard, it is immaterial whether the illegality of the information or activity results from Union law or from national law that is in compliance with Union law and what the precise nature or subject matter is of the law in question.

The above mentions many things trolls attempt: hate speech quite obviously, terrorism (including, especially, stochastic terrorism of the sort LibsOfTikTok does, which can be easily demonstrated from archives, lest someone want to take legal action against me and/or this article), discrimination (this includes national origin, race, and/or religion), and, essentially by its nature, “doxxing”.

Not only will Twitter be required to take action on such content, it is also required by both the Apple and Google Terms of Service to act in a “timely” manner upon reports of abusive conduct by users. At this time, Twitter does not. That will be another article later, if Twitter moderators continue to have serious delays due to serious cuts to their moderation team.

Related to that, I have submitted a detailed report to Apple about the lack of timely response to abusive content reports, and hope that either Twitter’s app is removed from their store, or that Twitter starts to take moderation seriously again.

What if I live in, or am currently in, the European Union?

Great question! You get a special moderation report that the rest of the world does not (this should be standard for everyone but by rules and regulations, the EU gets it, we don’t).

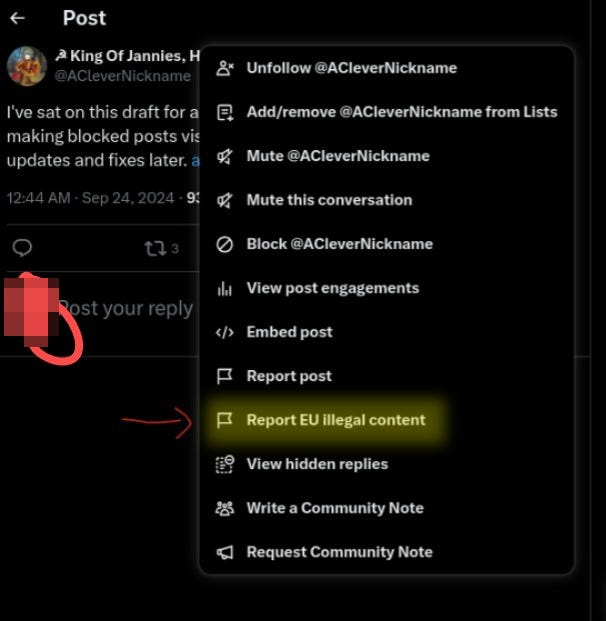

To access it, you lucky pluckers follow the same steps to get there, but you have an option the rest of us do not (a friend pretended to report me to get this screen):

You then have slightly different options; work with what seems closest:

What difference is made by reporting?

That’s the fun part. Mass reports have been a tool of fascists for a long time, even if the reports are without merit.

We rational & unhateful people? We need to use the tools available, use them accurately, and use them often. That will make a difference for other regular users of the platform, and cultivate a healthier platform for those wanting and needing social media to make connections and improve our society.

The net gain is that the troll has to find their friends again, and loses all the bad content they have already posted. For them it’s a “spray and pray” situation. For us, bad stuff goes down and stays down.

How long until there is action taken?

It depends on the severity as seen by the few Twitter moderators still in service. I have seen reports take 3 months. I have seen reports take 3 minutes. But action will be taken. And the worse the troll, and the more reports from more people, the sooner the action is taken.

While a subscribe is appreciated, it is not needed - you can just bookmark me in your browser. I’ve done everything I can to not accept money or organize a list. I write as a free service to the community.